What’s Still Missing in AI

Three Things #202: April 4, 2026

Note: My writing workflow is rapidly evolving thanks to AI, and I’ve also been in “builder” mode recently, more focused on shipping code than writing. I’m working through a backlog of articles that I wrote, but didn’t have time to polish or publish, over the past few months. I first wrote this article in December, and while most of it is still accurate and relevant, rereading it now, it’s remarkable how much has changed since then. I’ve left almost the entire original article intact.

It’s been a long time since I last wrote about my experience with AI applications. In some ways, progress over the past year has exceeded my expectations. For instance, AI tools are much better at coding, and at technical tasks in general, than I expected. Of course, in other ways, AI has failed to live up to those expectations. AI agents, for example, still aren’t sufficiently autonomous and can’t really do much of anything without supervision. The jagged frontier of AI abilities continues to both delight and disappoint.

I continue to bring AI tools into new areas of my life, and to rely on AI more and more each day. I crossed a tipping point a few months ago where I realized that, for the first time ever, I’m using these tools every single day. I can’t put my finger on precisely why, but in general they suddenly got really good at the things I need help with. Today, the raw intelligence capabilities are solid, but the tooling is still lacking.

That’s actually very promising! Because, while making AI more intelligent will continue to require major, difficult breakthroughs and paradigm shifts, improving the tooling and UX should be easy by comparison. It mostly just requires the hard work of building good product around this powerful tech, although there are some fundamental, structural issues at work here that may slow things down.

It feels like these fantastically capable tools are so close to being able to truly and overwhelmingly transform my workflow in a positive way, but they’re currently held back by three interrelated failures. They can’t reliably access or remember the right information at the right time (memory, context). They can’t act on the real world (realtime data, interfaces). And they can’t do either safely without exposing everything (privacy).

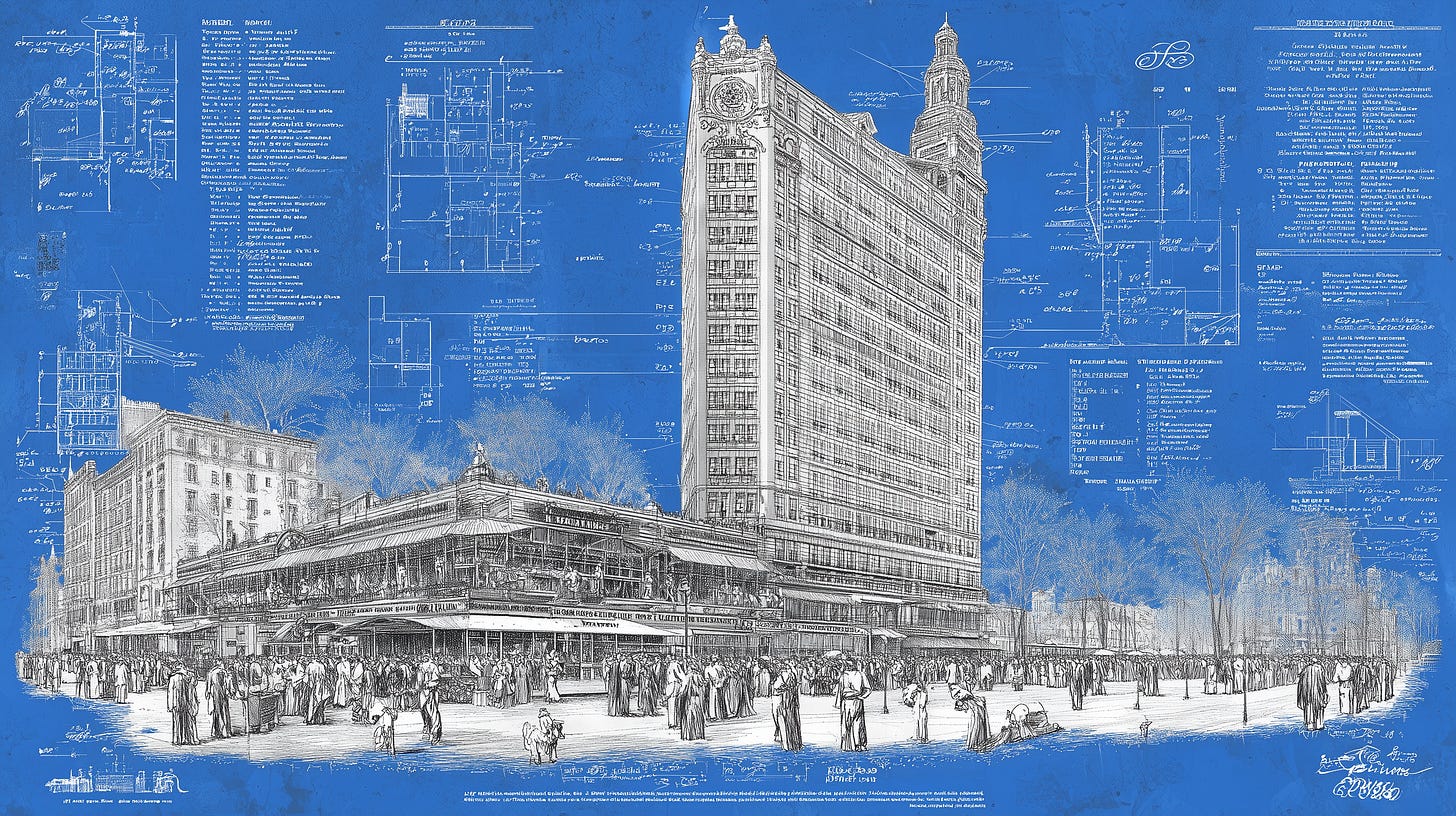

These aren’t three separate problems. They’re three facets of the same problem: AI tools don’t yet have safe, controlled, trusted access to the right information at the right time. We have a genius architect, but we’re trying to build the skyscraper with one hand tied behind our back.

Thing #1: Context 🧩

Context, as the name suggests, is the amount of “working memory” that an AI tool can hold at any given time. It’s all of the transient information supplied at inference time, including the system prompt and instructions, conversation history, documents, and user data. It contains lots of information that is absent from training, too detailed or private to appear in training, or simply more relevant to the current task than the model’s general pretrained knowledge.

Models themselves have no memory, but to create the illusion of memory, the tool—the application you’re actually interacting with—maintains this context and passes it back to the model as a single, long prompt every time inference is performed. The context window of popular models and tools has continued to increase gradually, and in the best models today support for a context window of 100-200K tokens is fairly common [NOTE: multiple frontier models today support 1M context size]. This means that the model can attend to the equivalent of roughly one whole book, or a small to medium size code repository [a small library, or a large code repository].
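To make the "illusion of memory" concrete, here is a minimal sketch of how an application might rebuild the prompt on every turn. Everything in it is an assumption for illustration: the `Conversation` class, the `build_prompt` method, and especially `count_tokens`, which counts whitespace-separated words rather than using a real tokenizer. Real tools are more sophisticated about what they keep and what they drop.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer (assumption): one token per word.
    return len(text.split())


@dataclass
class Conversation:
    """Hypothetical application-side store; the model itself keeps no state."""
    system_prompt: str
    max_tokens: int
    turns: list = field(default_factory=list)  # list of (role, text) pairs

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def build_prompt(self) -> str:
        # The system prompt is always sent; whatever budget remains is
        # filled with the most recent turns, newest first.
        budget = self.max_tokens - count_tokens(self.system_prompt)
        kept = []
        for role, text in reversed(self.turns):
            line = f"{role}: {text}"
            cost = count_tokens(line)
            if cost > budget:
                break  # older turns fall out of the context window
            kept.append(line)
            budget -= cost
        # Restore chronological order before sending to the model.
        return "\n".join([self.system_prompt, *reversed(kept)])
```

Each call to `build_prompt` produces the single long prompt that would be passed to the model. Once the budget runs out, the oldest turns silently disappear, which is one reason long conversations can seem to "forget" things.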

That’s sort of simultaneously a lot of context, and also not much context at all. It’s a lot of context because it’s orders of magnitude more “working memory” than most humans can hold in their mind. Most people are barely able to remember 8-10 digit phone numbers, let alone an entire novel with hundreds of pages of text. An AI model can do this with ease.

On the flip side, consider how much information you’d like to store in context. Something I’ve found while using AI tools more and more in my daily workflow is that this is, basically, everything I’ve ever written, everything I’ve ever said or done, and more or less everything I have access to. It’s all of the email I’ve ever read or sent, at least all of my work email from the past few years. It’s every document I’ve created, reviewed, or edited. It’s every text message I’ve sent or received. It’s every page I’ve browsed, query I’ve run, and article I’ve read. It’s a transcript of every meeting I’ve attended (and the ones I missed). If you want to get really far into the land of sci-fi, it’s every conversation I’ve had, including face-to-face conversations.

For most tasks, the more context that’s available the better the results. In my experience using the best AI tools available to consumers, you can try to capture all of that context in your prompt, talking about history, what’s already been tried, open questions, etc. Or, instead, you can just grab a bunch of relevant documents—emails, Slack threads, forum threads, code snippets, transcripts from calls, etc.—and let the AI work it out for itself, which it’s frighteningly good at.

Clearly there are limits, and there’s a law of diminishing returns here: the first, say, 10-100 documents in context will probably provide the lion’s share of the value compared to the long tail of thousands or millions of pieces of context—and too much context can also damage performance. [NOTE: In practice, what you’d really want here is something like RAG using MCP. The tools for this are getting better rapidly.] But even managing 10-100 documents of context is tricky.
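The idea of putting only the most relevant handful of documents into context can be sketched in a few lines. This is a toy under stated assumptions, not how any particular RAG system or MCP connector works: it scores documents with bag-of-words cosine similarity instead of learned embeddings, and the names `tokenize`, `cosine`, and `top_k` are made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    # Lowercase alphanumeric words; a real system would use embeddings.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, documents: dict, k: int = 2) -> list:
    """Return the names of the k most relevant documents, best first."""
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(body))), name)
        for name, body in documents.items()
    ]
    # Highest score first; ties broken by name so the order is stable.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    # Documents with no overlap at all are never worth the context space.
    return [name for score, name in scored[:k] if score > 0]
```

Only the documents that `top_k` returns would be pasted into the prompt. The rest stay out, which keeps the context small and avoids the performance hit from irrelevant material.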

I find myself “printing” out relevant conversations as PDF files, going to great lengths to extract data from platforms that don’t play nice like Slack and Notion (shame on them) and dropping JSON files into my AI chats. Another thing I’ve found that works incredibly well for technical tasks is dropping a ZIP file containing an entire code repository into the chat. This works so well because the model can capably navigate file relationships, imports, and naming conventions holistically rather than one file at a time [NOTE: While I wasn’t using them at the time, modern coding tools like Claude Code and Cursor have solved this problem by maintaining a live, indexed view of an entire repository so you don’t need to do it manually]. When there’s relevant context, I’ve often been blown away by how good the responses are.

But it’s a lot of work, and because context is ephemeral, you have to constantly manage it. The major tools, such as ChatGPT and Claude, have introduced a few features to help with this, such as Projects (a bunch of conversations can share some context) and Memory. However, today these features are rudimentary at best. Many AI tools have recently introduced MCP “connectors” which, if you trust them, give them unfettered access to your Gmail, Google Drive, Slack, etc. Privacy concerns aside (more on this in a moment), this is a step in the right direction.

Long story short: the raw intelligence of the tools I’m using feels sufficient, in general, but they’d be a lot more useful if they could autonomously access relevant information more intelligently. I want to give these tools access to information, obviously in a private, secure fashion, and inject relevant information in the right place at the right time. I also wish I had a better way to manage context across and among all of my active AI conversations, and share it with my team. This is necessary for AI tools to move from novelty to a genuinely indispensable part of my everyday workflow.

Thing #2: Data 📈

LLMs are great conversationalists. They can answer questions, entertain hypotheses, and tell stories. Some people use them as companions. I find that they make great sparring partners, evaluating ideas, poking holes, offering critical, constructive feedback, testing assumptions, etc. They’re good at writing code. But when it comes to doing more than generating words and text, they quickly hit their limits.

There’s a long, long list of other things I’d like AI tools to be able to do on my behalf. The most obvious ones are shopping and planning travel. I hate shopping, and I absolutely hate browsing online product listings (I can’t stand any shopping sites: in my opinion they’re all monstrously designed). AI tools are pretty good at doing basic research and helping me figure out which products to buy, but they’re unable to go the final step and actually complete the purchase. I’m aware that OpenAI last year announced a Stripe partnership and agentic commerce protocol, but this only works with certain, specific vendors, and I have yet to see it actually work.

Planning travel is an even bigger headache. Some trips, the more complicated ones, can take hours to plan. If I’m going somewhere unfamiliar or where I don’t speak or read the language, if I’m booking flights that aren’t a simple roundtrip, if there are multiple destinations, if I’m traveling with other people, etc., the complexity can easily explode. AI tools are fantastic at handling this sort of complexity, and in the abstract they’re very good at travel planning. Enter your preferences and constraints and they can create the most amazing hypothetical itinerary for you. The problems start when it comes to turning those trips into a reality: verifying prices and inventory, and actually booking things.

The tools I use are constantly hamstrung by the fact that they can’t even see realtime availability of things like flights and hotels. They can plan the perfect itinerary, but if the flights and hotels aren’t actually available, or are unreasonably priced, the suggestions are totally useless because they’re not grounded in reality. Again, this seems like such a basic task: checking hotel availability and prices shouldn’t require genius level intelligence. And it doesn’t. But it does require something that AI tools by and large still don’t have access to: friendly interfaces.

The Internet wasn’t designed for AI tools, any more than the built physical world was designed for non-humanoid robots. In fact, it’s far worse than this: the Internet was designed to be actively hostile to bots. The best example of this is the CAPTCHA, which is hostile to both human and non-human users. It’s designed to prevent bots from crawling web pages, and from doing precisely the kind of things we want and need AI agents to do, such as checking prices and availability. Companies use these hostile tools to prevent other companies from taking advantage of their sensitive data. It’s an extremely frustrating workaround to a frustrating problem.

There are a number of AI tools, such as Manus and Operator, which claim to be able to browse arbitrary data on the web, run arbitrary programs inside VMs, etc. I’ve tested a number of these tools, and the results have been pretty poor. In my experience, even the most basic searches for things like flights immediately return CAPTCHAs and other sorts of challenges. While AI tools are definitely smart enough to solve CAPTCHAs, for some reason these tools frustratingly require you to intervene every time they encounter one. And, because of high latency and low-quality VMs, they’re stymied by even simpler verification checks, such as buttons that require you to click and hold or drag and drop. Even when I stepped in to try to manually solve them, it didn’t work for the same reasons. The experience today is frustratingly limited. [NOTE: The situation has improved somewhat since I wrote this thanks to protocols like MCP, and the proliferation of agent-friendly APIs and CLI tools. But agents still can’t book flights or check hotel prices!]

Fixing this will require nothing short of redesigning and rebuilding the Internet to work for autonomous agents as well as it does for humans, if not primarily for autonomous agents. We’ve seen the first steps in that direction, such as OpenAI’s aforementioned Agentic Commerce Protocol and Coinbase’s x402 protocol. Much more work remains to be done here, and I’m surprised I haven’t yet seen more progress along these lines.

If the first phase of modern AI was about raw intelligence, the next phase should be about interoperability: making everything work well together.

Thing #3: Privacy 👤

I’m a privacy maximalist and I believe that privacy is a fundamental human right. Today, Internet access is also a fundamental human right and, by extension, so is privacy in one’s access to the Internet and its services: browsing, messaging, financial transactions, etc. AI is rapidly eating software and taking over the world. In the same fashion, private access to AI services is, or soon will be, a fundamental human right.

But private access to AI services is, for now, extremely limited. There’s a core tension in how we use AI tools today. On the one hand, in order for them to be truly and maximally useful, they need to have access to basically everything, as described above: all of our sensitive information, all of the tools and services we rely upon. On the other hand, we don’t fully trust them yet, for good reason. We want the benefits of maximal context with the protections of maximal privacy, and the reality today is that these pull in opposite directions.

Yet the privacy landscape in AI tools today is anything but inspiring. Many people naturally use AI tools for extremely personal tasks such as analyzing financial or health records. This means handing sensitive personal data to unaccountable tech giants offering AI products and services. What’s more, those companies are regularly training their models on that user data, profiting from it without sharing any profits with the users. In several high profile cases, they’ve also been compelled to release troves of private chat data in legal cases.

The world described above, where AI tools have access to a huge amount of context (our communications, our calendar, our files, etc.), is an extremely attractive one, but it’s also one that requires extraordinarily robust privacy in order to avoid the most dystopian outcomes. The only surefire way to achieve that degree of privacy today is to run everything locally.

The good news is that, thanks to the rapid improvement in open models, you can do quite a lot locally, at least if you’re a sophisticated user. The bad news is that local, open models are still extremely limited for several reasons. Most obviously, only the very most powerful systems are capable of running the most powerful models, and the vast majority of consumers don’t have access to hardware that’s powerful enough; even purpose-built machines from Apple and Nvidia that cost $10,000 are quite limited in terms of what they can run locally. It’s also technically challenging to set up: take it from me, setting up a local LLM end to end was much more complex than I expected. And even the best tools available for running AI locally today are quite limited compared to what you can do with frontier models. For instance, in my testing, local models struggle to read even medium-sized PDF files.

There’s an alternative: use a private inference tool to run models in a secure cloud, on more powerful systems. The first few viable private AI tools have begun to emerge. These include Venice.ai, which runs on decentralized infrastructure and offers a pretty good privacy model (though it isn’t end to end encrypted); Lumo from Proton, which is end to end encrypted; NEAR AI, which offers full end to end encryption and attestations that inference is running inside a secure enclave, protected from the machine’s operator; and Brave Leo, which offers similar guarantees. Other, larger providers of AI infrastructure, including Microsoft and Google, have announced private AI products, but they’re not yet available for consumer use.

Once these tools are more mature, they should get us around 80% of the way to feature parity with the most powerful, frontier models and tools. But the reality is that these platforms can only ever run open source models, and the frontier AI labs will always keep the most powerful models to themselves, so the most powerful tools will always be the ones available only from those unaccountable tech giants. Open models are improving rapidly but so are the frontier models, which seem to be able to maintain a comfortable 6-8 month lead. Accessing the very most powerful AI tools for now still requires paying a subscription fee and handing your data to the frontier labs.

Truly strong privacy in frontier AI is probably therefore one or two breakthroughs away from really being possible. There are a few promising technologies, most obviously fully homomorphic encryption (FHE), which allows computation on data that remains encrypted. FHE is getting faster and more powerful every year, but it’s still a few years away from being usable for consumer applications. This is why many private AI tools, including the ones mentioned above, made the pragmatic choice to instead use secure enclaves, which provide strong guarantees and very fast performance.
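For a feel of what "computation on data that remains encrypted" means, here is a toy sketch of the Paillier cryptosystem. To be clear about the assumptions: Paillier is only additively homomorphic, so it is a much simpler relative of FHE and not FHE itself. The primes here are far too small to be secure, and the function names are made up for illustration. It is not what any of the tools mentioned above use.

```python
import math
import secrets


def keygen(p: int = 293, q: int = 433):
    # Toy primes (assumption): real keys use primes of 1024+ bits.
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # modular inverse, valid because g = n + 1
    return n, (lam, mu)   # public key n, private key (lam, mu)


def encrypt(n: int, m: int) -> int:
    n2 = n * n
    # Pick a random blinding factor r that is coprime to n.
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(n: int, private_key, c: int) -> int:
    lam, mu = private_key
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n


def add_encrypted(n: int, c1: int, c2: int) -> int:
    # Multiplying ciphertexts adds the hidden plaintexts (mod n).
    return (c1 * c2) % (n * n)
```

A server holding only `n` and two ciphertexts can call `add_encrypted` and hand back a result it cannot read. Only the holder of the private key can decrypt the sum. FHE extends this idea from addition to arbitrary computation, which is where the heavy performance cost comes from.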

The only alternative I can imagine is a decentralized community somehow developing an AI model that’s as powerful as the ones developed by the frontier labs. This seems unthinkable today, as the centralizers have a big lead, but then again, so did the idea of Linux challenging Microsoft when that project started. Linux lost the desktop war, but won the server market; something similar might still happen in AI.

Context management hits differently when you're running persistent agents. For my setup, the hard part wasn't surfacing the right context. It was maintaining reliable task state between cron runs.

Silent failures where the agent thought a job completed but hadn't. Fixed it with a 4-minute lookback window in cron to catch anything that dropped. Wrote up the broader infrastructure decisions at https://thoughts.jock.pl/p/wizboard-fizzy-ai-agent-interface-pivot-2026 Migrated from a custom task system to an open-source Rails board to reduce surface area. Real-world data access gap you mention is real too. Haven't cracked that one. CAPTCHAs still win.

The execution gap point stuck with me…especially how much of it seems driven by context and tooling rather than intelligence.

In other systems I’ve worked around, more intelligence often just makes things more measurable, not easier to execute — especially when the underlying workflows stay the same.

Curious which constraint you see as primary right now (context, data, or privacy), and whether agents actually fix that or just scale the same friction.